Video Generation

Install Diffusers by following the instructions at https://huggingface.co/docs/diffusers/en/installation

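```python
import torch
from diffusers import DiffusionPipeline
from diffusers.utils import export_to_video

# Load the ModelScope text-to-video model in half precision (requires a CUDA GPU)
pipe = DiffusionPipeline.from_pretrained("damo-vilab/text-to-video-ms-1.7b", torch_dtype=torch.float16, variant="fp16")
pipe = pipe.to("cuda")

prompt = "<----------->"  # replace with your text prompt

# .frames holds one video per prompt; [0] selects the video for this prompt
video_frames = pipe(prompt).frames[0]

# Write the frames to an .mp4 file and print its path
video_path = export_to_video(video_frames)
print(video_path)
```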
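To generate several clips in one run, the steps above can be wrapped in a loop. This is a minimal sketch, not part of the original instructions: `generate_videos`, the `video_000.mp4` naming scheme and the 25-step default are illustrative assumptions. The pipeline and export function are passed in, so the loop itself does not load the model. `num_frames` and `num_inference_steps` are arguments of the Diffusers text-to-video pipeline (its defaults are 16 frames and 50 steps); fewer steps trade quality for speed.

```python
from typing import Any, Callable, List, Sequence


def generate_videos(
    pipe: Callable[..., Any],
    export: Callable[..., str],
    prompts: Sequence[str],
    num_frames: int = 16,
    num_inference_steps: int = 25,  # assumption: fewer than the default 50, for speed
) -> List[str]:
    """Generate one clip per prompt and return the video paths in prompt order.

    `pipe` is expected to behave like the DiffusionPipeline loaded above and
    `export` like diffusers.utils.export_to_video.
    """
    paths: List[str] = []
    for i, prompt in enumerate(prompts):
        result = pipe(prompt, num_frames=num_frames, num_inference_steps=num_inference_steps)
        frames = result.frames[0]  # .frames is batched: entry 0 is this prompt's video
        # Hypothetical naming scheme: video_000.mp4, video_001.mp4, ...
        paths.append(export(frames, output_video_path=f"video_{i:03d}.mp4"))
    return paths
```

With the pipeline from the snippet above loaded, `generate_videos(pipe, export_to_video, [prompt])` would write one clip per prompt and return the file paths.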

Video Evaluation

Install with pip

```shell
pip install vbench
```

Install with git clone

```shell
git clone https://github.com/Vchitect/VBench.git
pip install -r VBench/requirements.txt
pip install VBench
```
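After installing Diffusers and VBench, one quick check is to confirm that the packages can be found without actually importing them. A minimal sketch; it assumes `torch`, `diffusers` and `vbench` are the import names of the installed packages, and `check_installed` is a hypothetical helper.

```python
import importlib.util
from typing import Dict, Iterable


def check_installed(modules: Iterable[str] = ("torch", "diffusers", "vbench")) -> Dict[str, bool]:
    """Return {module_name: found?} using find_spec, which locates but does not import."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


if __name__ == "__main__":
    for name, found in check_installed().items():
        print(f"{name}: {'found' if found else 'MISSING'}")
```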

Evaluate Your Own Videos

```shell
python evaluate.py \
    --dimension $DIMENSION \
    --videos_path /path/to/folder_or_video/ \
    --mode=custom_input
```
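To score the same videos on several dimensions, the command above can be run once per dimension from Python. This is a sketch under stated assumptions: it must be run from the cloned VBench directory (where `evaluate.py` lives), and the dimension list is our reading of the dimensions VBench documents for `--mode=custom_input`, so check it against the README of your VBench version. `build_command` and `evaluate_dimensions` are hypothetical helpers; by default they only print the commands.

```python
import shlex
import subprocess
import sys
from typing import List, Sequence

# Assumption: dimensions listed in the VBench README as supporting --mode=custom_input.
CUSTOM_INPUT_DIMENSIONS = (
    "subject_consistency",
    "background_consistency",
    "motion_smoothness",
    "dynamic_degree",
    "aesthetic_quality",
    "imaging_quality",
)


def build_command(dimension: str, videos_path: str) -> List[str]:
    """Argument list equivalent to the shell command above, for one dimension."""
    return [
        sys.executable, "evaluate.py",
        "--dimension", dimension,
        "--videos_path", videos_path,
        "--mode=custom_input",
    ]


def evaluate_dimensions(
    videos_path: str,
    dimensions: Sequence[str] = CUSTOM_INPUT_DIMENSIONS,
    dry_run: bool = True,
) -> List[List[str]]:
    """Run one evaluate.py call per dimension, or only print them when dry_run is True."""
    commands = [build_command(d, videos_path) for d in dimensions]
    for cmd in commands:
        if dry_run:
            print(shlex.join(cmd))
        else:
            subprocess.run(cmd, check=True)  # raises on the first failing dimension
    return commands
```

Set `dry_run=False` once the printed commands look right.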

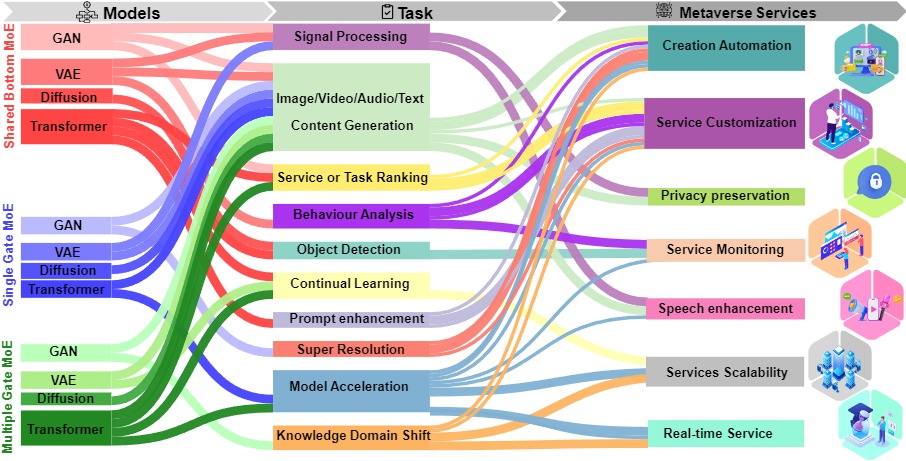

MOEGAI-metaverse Applications

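This graph maps generative model families (GAN, VAE, diffusion, Transformer) and mixture-of-experts (MoE) designs (shared bottom, single gate, multiple gate) to the tasks they have been applied to, each backed by a research paper. The entries below list each task and its reference, grouped by model family and MoE design.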
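The three MoE designs used to group the entries below differ mainly in where the gate sits. As a rough, framework-free sketch, assuming these labels follow the shared-bottom, one-gate and multi-gate layouts compared in multi-task MoE work such as MMoE (Ma et al., 2018), the routing can be written with plain Python callables standing in for networks. All function names here are hypothetical.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Layer = Callable[[Vector], Vector]  # an expert, a task head, or a shared bottom network


def softmax(logits: Sequence[float]) -> Vector:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def gate(weights: Sequence[Sequence[float]], x: Vector) -> Vector:
    """Linear gate: one row of weights per expert, normalised with softmax."""
    return softmax([sum(w * xi for w, xi in zip(row, x)) for row in weights])


def mix(experts: Sequence[Layer], gate_probs: Sequence[float], x: Vector) -> Vector:
    """Gate-weighted sum of the expert outputs."""
    outputs = [expert(x) for expert in experts]
    return [sum(p * out[j] for p, out in zip(gate_probs, outputs))
            for j in range(len(outputs[0]))]


def shared_bottom(bottom: Layer, heads: Sequence[Layer], x: Vector) -> List[Vector]:
    """Shared bottom: one shared network feeds every head; there is no gate."""
    h = bottom(x)
    return [head(h) for head in heads]


def single_gate_moe(experts: Sequence[Layer], gate_weights: Sequence[Sequence[float]],
                    heads: Sequence[Layer], x: Vector) -> List[Vector]:
    """Single gate: one gate mixes the experts and every head sees that same mixture."""
    h = mix(experts, gate(gate_weights, x), x)
    return [head(h) for head in heads]


def multi_gate_moe(experts: Sequence[Layer],
                   gate_weights_per_task: Sequence[Sequence[Sequence[float]]],
                   heads: Sequence[Layer], x: Vector) -> List[Vector]:
    """Multiple gates: each task has its own gate over the same shared experts."""
    return [head(mix(experts, gate(g, x), x))
            for g, head in zip(gate_weights_per_task, heads)]
```

In the papers listed, the experts are full networks (for example the multiple specialized discriminators in MCL-GAN); the toy callables here only illustrate how outputs are routed and combined.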

GAN

Shared Bottom MoE

- Task: Data Augmentation - Object Detection. Ref: Data Augmentation for Intelligent Mechanical Fault Diagnosis Based on Local Shared Multiple-Generator GAN

- Task: Content Generation - Image. Ref: MCL-GAN: Generative Adversarial Networks with Multiple Specialized Discriminators

Single Gate MoE

- Task: Content Generation - Image. Ref: MEGAN: Mixture of Experts of Generative Adversarial Networks for Multimodal Image Generation

Multiple Gate MoE

- Task: Speech Enhancement; Content Generation - Audio. Ref: Perceptual Loss Function for Speech Enhancement Based on Generative Adversarial Learning

VAE

Shared Bottom MoE

- Task: Continual Learning. Ref: Mixture-of-Variational-Experts for Continual Learning

Single Gate MoE

- Task: Behaviour Analysis - Anomaly Detection. Ref: Mixture of Experts with Convolutional and Variational Autoencoders for Anomaly Detection

Multiple Gate MoE

- Task: Cross Modality Content Generation. Ref: Variational Mixture-of-Experts Autoencoders for Multi-Modal Deep Generative Models

Diffusion

Shared Bottom MoE

- Task: Content Generation - Image. Ref: ERNIE-ViLG 2.0: Improving Text-to-Image Diffusion Model with Knowledge-Enhanced Mixture-of-Denoising-Experts

Single Gate MoE

- Task: Super Resolution. Ref: Image Super-Resolution via Latent Diffusion: A Sampling-Space Mixture of Experts and Frequency-Augmented Decoder Approach

Multiple Gate MoE

- Task: Content Generation - Image. Ref: RAPHAEL: Text-to-Image Generation via Large Mixture of Diffusion Paths

Transformer

Shared Bottom MoE

- Task: Knowledge Domain Shift; Prompt Enhancement. Ref: Diversifying Content Generation for Commonsense Reasoning with Mixture of Knowledge Graph Experts

Single Gate MoE

- Task: Model Acceleration. Ref: DeepSpeed-MoE: Advancing Mixture-of-Experts Inference and Training to Power Next-Generation AI Scale

- Task: Content Generation - Image. Ref: StableMoE: Stable Routing Strategy for Mixture of Experts

Multiple Gate MoE

- Task: Model Acceleration; Content Generation - Image. Ref: M³ViT: Mixture-of-Experts Vision Transformer for Efficient Multi-task Learning with Model-Accelerator Co-design

- Task: Image Classification. Ref: Scaling Vision with Sparse Mixture of Experts

📚 Cite Our Work

If our work aids your research, please cite it:

```bibtex
@misc{liu2024fusion,
  title={Fusion of Mixture of Experts and Generative Artificial Intelligence in Mobile Edge Metaverse},
  author={Guangyuan Liu and Hongyang Du and Dusit Niyato and Jiawen Kang and Zehui Xiong and Abbas Jamalipour and Shiwen Mao and Dong In Kim},
  year={2024},
  eprint={2404.03321},
  archivePrefix={arXiv}
}
```